It’s almost exactly a year since we launched this newsletter and began writing our book. Earlier this week, we turned in our manuscript to our publisher! It’s now in the hands of peer reviewers.

In the book, we dig into the ideas behind generative AI, tackle fears around artificial general intelligence, explain the scientific and ethical limitations of predictive AI, categorize the many types of AI harms, explore why AI has failed to fix social media, analyze how AI hype and misinformation are created and amplified, and argue that many AI problems in fact reflect problems with capitalism requiring deeper reforms. While these topics are largely the same as those covered in the newsletter, there’s very little overlap in the content.

Publishing doesn’t move as fast as AI does; the book will be out sometime in 2024. Meanwhile, we’re giving talks, especially virtually, based on what we learned while researching the book.

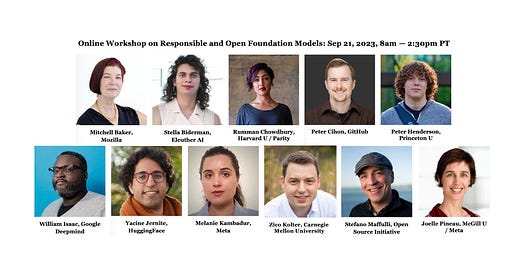

On September 21, we’re co-organizing a virtual workshop on responsible development of open approaches to AI, along with Rishi Bommasani and Percy Liang at Stanford. We’re pleased to have an exceptionally knowledgeable cast of speakers spanning AI, open-source software, policy, and the intersections of these areas. Register for the Zoom link.

A group of us will follow up the workshop with a collaborative paper proposing guardrails and laying out research questions for open foundation models.

Yesterday, the two of us were named on TIME magazine’s list of 100 (actually 103) most influential people in AI. Of course, lists like this are pretty arbitrary, but we’re glad that this newsletter put us on the map, and that we’ve been able to do it without curtailing our research. Here’s a short interview with us. Thank you to our 13,000 subscribers!
